bagging resampling vs replicate resampling | bootstrapping vs resampling



Preference data may take the form of pairwise comparisons, in which respondents are asked to select the more preferred alternative from each pair of alternatives. Note that paired-comparison and ranking methods, especially when differences between choice alternatives are small, impose lower constraints on the response ...

Formally, a ranking of \(m\) items, labeled \(1,\dots,m\), is a mapping \(a\) from the set of items \(\{1,\dots,m\}\) to the set of ranks \(\{1,\dots,m\}\). When all items are ...

A natural desideratum is to group subjects with similar preferences together. To this end, it is necessary to measure the spread between rankings through ...
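The text above breaks off before naming how the spread between rankings is measured. As an illustration only, the sketch below uses the Kendall distance (the number of item pairs that two rankings order differently), which is one common choice and is an assumption here, not necessarily the measure the source intended. The function name `kendall_distance` and the toy rankings are hypothetical; rankings are stored as lists in which position `i` holds the rank of item `i`.

```python
from itertools import combinations


def kendall_distance(a, b):
    """Count the item pairs that rankings a and b order differently.

    a[i] and b[i] are the ranks given to item i (0-based item index).
    Assumes complete rankings without ties.
    """
    if len(a) != len(b):
        raise ValueError("rankings must cover the same items")
    discordant = 0
    for i, j in combinations(range(len(a)), 2):
        # A pair is discordant when the two rank differences have opposite signs.
        if (a[i] - a[j]) * (b[i] - b[j]) < 0:
            discordant += 1
    return discordant


identity = [1, 2, 3, 4]  # item i receives rank i + 1
reverse = [4, 3, 2, 1]   # complete reversal of the identity ranking
swapped = [2, 1, 3, 4]   # only the first two items exchanged

print(kendall_distance(identity, swapped))
print(kendall_distance(identity, reverse))
```

A distance of this kind is what a clustering or tree-based procedure would use to decide which subjects have similar preferences.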


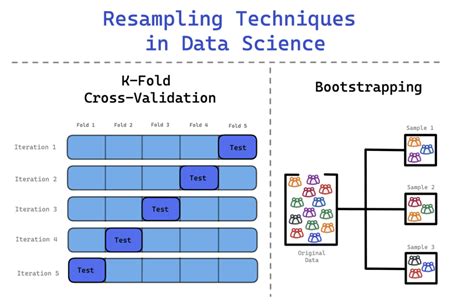



Inspired by the success of supervised bagging and boosting algorithms, we propose non-adaptive and adaptive resampling schemes for the integration of multiple ...

Q3. How do you solve the class imbalance problem? A. There are several ways to address class imbalance. Resampling: you can oversample the minority class or undersample the ...

We briefly outline the main difference between bagging and boosting, the ensemble methods we are going to work with. Bagging (Section 4.1) learns decision trees for many datasets of the same size, randomly drawn with replacement from the training set. Thereafter, a proper predicted ranking is assigned to each unit.
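The bagging description above (many same-size datasets drawn with replacement, one model per dataset, predictions combined afterwards) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the source's procedure: the base learner is a one-dimensional threshold rule ("stump") instead of a full decision tree, the target is a binary label instead of a ranking, and the names `fit_stump`, `bagging_fit` and `bagging_predict` are hypothetical.

```python
import random
from collections import Counter


def fit_stump(points):
    """Fit a 1-D threshold rule: predict `right` if x > t, else `left`.

    points is a list of (x, label) pairs with labels 0 or 1. The threshold
    and orientation with the fewest training errors are kept.
    """
    best = None
    for t in sorted({x for x, _ in points}):
        for left, right in ((0, 1), (1, 0)):
            errors = sum((right if x > t else left) != y for x, y in points)
            if best is None or errors < best[0]:
                best = (errors, t, left, right)
    _, t, left, right = best
    return lambda x: right if x > t else left


def bagging_fit(points, n_models, rng):
    """Train one stump per bootstrap replicate of the training set."""
    models = []
    for _ in range(n_models):
        # Same size as the original data, drawn with replacement.
        replicate = rng.choices(points, k=len(points))
        models.append(fit_stump(replicate))
    return models


def bagging_predict(models, x):
    """Aggregate the individual predictions by majority vote."""
    votes = Counter(model(x) for model in models)
    return votes.most_common(1)[0][0]


# Toy data: the label is 1 exactly when x >= 10.
points = [(x, int(x >= 10)) for x in range(20)]
models = bagging_fit(points, n_models=25, rng=random.Random(0))
print(bagging_predict(models, 2), bagging_predict(models, 17))
```

An odd number of models is used so that a two-class majority vote cannot tie.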

Two-part answer. First, the definitional answer: since "bagging" means "bootstrap aggregation", you have to bootstrap, which is defined as sampling with replacement. Second, and more interesting: averaging predictors only improves the ...
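To make "sampling with replacement" concrete, the sketch below draws a single bootstrap sample with Python's standard `random.choices` and separates the observations that were drawn from those that were left out. The helper name `bootstrap_sample`, the toy data and the seed are assumptions for illustration.

```python
import random


def bootstrap_sample(data, rng):
    """Draw len(data) items from data uniformly at random, with replacement."""
    return rng.choices(data, k=len(data))


rng = random.Random(0)    # fixed seed so the run is repeatable
data = list(range(1000))  # toy data set of 1000 distinct observations
sample = bootstrap_sample(data, rng)

in_bag = set(sample)                               # drawn at least once
out_of_bag = [x for x in data if x not in in_bag]  # never drawn
unique_fraction = len(in_bag) / len(data)
print(len(sample), round(unique_fraction, 3))
```

Because items can be drawn more than once, a bootstrap sample of size n contains on average a fraction 1 - (1 - 1/n)^n of the distinct observations, which approaches about 0.632 for large n; the remainder are the out-of-bag observations.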

This article describes a component in Azure Machine Learning designer. Use this component to create a machine learning model based on the decision forests algorithm. Decision forests are fast, supervised ensemble models. This component is a good choice if you want to predict a target with a maximum of two outcomes.

In statistics, resampling is the creation of new samples based on one observed sample. Resampling methods are:

1. Permutation tests (also re-randomization tests)
2. Bootstrapping
3. Cross validation
4. Jackknife

The idea is to adaptively resample the data:

• Maintain a probability distribution over the training set;
• Generate a sequence of classifiers in which the “next” classifier focuses on the samples where the “previous” classifier failed;
• Weigh machines according to their performance.

Sampling with replacement is not required. Two issues come up when you use subsampling without replacement instead of the usual bootstrap samples: 1. You must determine what sub-sample size to use, and 2. Out-of-bag observations are no ...
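The three adaptive-resampling steps listed above (keep a distribution over the training set, let each new classifier focus on earlier failures, weigh classifiers by performance) can be sketched as follows. The source does not say which update rule it has in mind; the AdaBoost-style reweighting used here is an assumption, as are the threshold-rule base learner, the labels in {-1, +1}, and the names `fit_stump`, `boost_by_resampling` and `predict`.

```python
import math
import random


def fit_stump(points):
    """Fit a 1-D threshold rule with labels in {-1, +1}."""
    best = None
    for t in sorted({x for x, _ in points}):
        for left, right in ((-1, 1), (1, -1)):
            errors = sum((right if x > t else left) != y for x, y in points)
            if best is None or errors < best[0]:
                best = (errors, t, left, right)
    _, t, left, right = best
    return lambda x: right if x > t else left


def boost_by_resampling(points, rounds, rng):
    n = len(points)
    weights = [1.0 / n] * n  # probability distribution over the training set
    ensemble = []            # list of (alpha, classifier) pairs
    for _ in range(rounds):
        # Resample according to the current distribution: hard cases recur.
        sample = rng.choices(points, weights=weights, k=n)
        clf = fit_stump(sample)
        miss = [clf(x) != y for x, y in points]
        err = sum(w for w, m in zip(weights, miss) if m)
        if err >= 0.5:
            continue  # no better than chance on the weighted data: skip it
        err = max(err, 1e-10)  # avoid division by zero for a perfect round
        alpha = 0.5 * math.log((1 - err) / err)  # classifier weight
        ensemble.append((alpha, clf))
        # Raise the weight of misclassified points, lower the rest, renormalize.
        weights = [w * math.exp(alpha if m else -alpha)
                   for w, m in zip(weights, miss)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble, weights


def predict(ensemble, x):
    """Weighted vote of the classifiers in the ensemble."""
    score = sum(alpha * clf(x) for alpha, clf in ensemble)
    return 1 if score >= 0 else -1


# Toy data no single threshold can separate: +1, then -1, then +1 again.
points = [(x, -1 if 10 <= x < 20 else 1) for x in range(30)]
ensemble, weights = boost_by_resampling(points, rounds=30, rng=random.Random(0))
accuracy = sum(predict(ensemble, x) == y for x, y in points) / len(points)
print(len(ensemble), accuracy)
```

In contrast to bagging, the resampling distribution here changes from round to round, which is what makes the scheme adaptive.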

These techniques, while distinct in their applications, both harness the power of resampling to enhance the stability and predictive performance of models. In this essay, we will delve into the concepts of bootstrapping and bagging, exploring their principles, advantages, and real-world applications.

Common machine learning resampling methods like bootstrapping and permutation testing attempt to describe how reliably a given sample represents the true population by taking multiple sub-samples.

All three are so-called "meta-algorithms": approaches that combine several machine learning techniques into one predictive model in order to decrease the variance (bagging), decrease the bias (boosting), or improve the predictive force (stacking, alias ensemble).
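Permutation testing, mentioned above alongside bootstrapping, can be illustrated with a small two-sample test for a difference in means. This is a sketch under assumptions: the function name `permutation_test`, the measurements and the number of permutations are made up for illustration, and the p-value uses the common "add one" correction so that it is never exactly zero.

```python
import random
from statistics import mean


def permutation_test(group_a, group_b, n_permutations, rng):
    """Two-sided permutation test for a difference in group means.

    Group labels are shuffled repeatedly; the p-value is the share of
    shuffles whose mean difference is at least as large as the observed one.
    """
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    hits = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # random reassignment of the group labels
        perm_a = pooled[:len(group_a)]
        perm_b = pooled[len(group_a):]
        if abs(mean(perm_a) - mean(perm_b)) >= observed:
            hits += 1
    return (hits + 1) / (n_permutations + 1)


a = [10.1, 9.8, 10.3, 10.0, 9.9, 10.2]   # hypothetical measurements, group A
b = [12.0, 11.8, 12.3, 12.1, 11.9, 12.2]  # hypothetical measurements, group B
p_separated = permutation_test(a, b, 2000, random.Random(0))
p_identical = permutation_test([1, 2, 3, 4], [1, 2, 3, 4], 200, random.Random(0))
print(p_separated, p_identical)
```

Unlike the bootstrap, a permutation test reshuffles the observed values without replacement, so every shuffle contains exactly the original observations.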


4.1 Introduction. In this chapter, we make a major transition. We have thus far focused on statistical procedures that produce a single set of results: regression coefficients, measures of fit, residuals, classifications, and others. There is but one regression equation, one set of smoothed values, or one classification tree.

